Commuter

Commuter

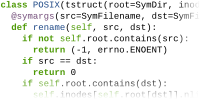

Model your software interface using a symbolic variant of Python.

Test your code

automatically to find cases of

unnecessary, non-

Improve your software's scalability by eliminating unnecessary sharing.

Sometimes it's bad to share with others. On modern massive multicores, shared cache lines can severely limit your software's ability to scale. But sharing is often necessary to make software do what it does.

Commuter is an automated scalability testing tool that hunts down unnecessary sharing in your code. Unlike traditional scalability testing, Commuter doesn't require handcrafted workloads or even multicore hardware. Starting with a high-level software interface model written in Python – not some esoteric specification language – Commuter automatically generates thousands of test cases for your interface and tests your implementation to root out the exact causes and locations of unnecessary, scalability-limiting sharing.

Publications

At the heart of Commuter is the scalable commutativity rule: whenever interface operations commute, they have a conflict-free implementation. This is how Commuter knows the difference between necessary and unnecessary sharing. You can find all of the details in our SOSP 2013 paper below, including a full formal treatment of the rule, a detailed explanation of Commuter, and how we applied the rule and Commuter to build a POSIX file system and virtual memory system.

Lillian Tsai, Eddie Kohler, M. Frans Kaashoek, and Nickolai Zeldovich. September, 2018.

This paper explains a flaw in the published proof of the Scalable Commutativity Rule (SCR), presents a revised and formally verified proof of the SCR in the Coq proof assistant, and discusses the insights and open questions raised from our experience proving the SCR.

Austin T. Clements. Ph.D. thesis, Massachusetts Institute of Technology, June 2014.

What fundamental opportunities for multicore scalability are latent in software interfaces, such as system call APIs? Can scalability opportunities be identified even before any implementation exists, simply by considering interface specifications? To answer these questions this dissertation introduces the scalable commutativity rule: Whenever interface operations commute, they can be implemented in a way that scales. This rule aids developers in building scalable multicore software starting with interface design and carrying on through implementation, testing, and evaluation.

This dissertation formalizes the scalable commutativity rule and defines a novel form of commutativity named SIM commutativity that makes it possible to fruitfully apply the rule to complex and highly stateful software interfaces.

To help developers apply the rule, this dissertation introduces an automated method embodied in a new tool named Commuter, which accepts high-level interface models, generates tests of operations that commute and hence could scale, and uses these tests to systematically evaluate the scalability of implementations. We apply Commuter to a model of 18 POSIX file and virtual memory system operations. Using the resulting 26,238 scalability tests, Commuter systematically pinpoints many problems in the Linux kernel that past work has observed to limit application scalability and identifies previously unknown bottlenecks that may be triggered by future hardware or workloads.

Finally, this dissertation applies the scalable commutativity rule and Commuter to the design and implementation of a new POSIX-like operating system named sv6. sv6's novel file and virtual memory system designs enable it to scale for 99% of the tests generated by Commuter. These results translate to linear scalability on an 80-core x86 machine for applications built on sv6's commutative operations.

Austin T. Clements, M. Frans Kaashoek, Nickolai Zeldovich, Robert T. Morris, and Eddie Kohler. SOSP 2013.

What fundamental opportunities for scalability are latent in interfaces, such as system call APIs? Can scalability opportunities be identified even before any implementation exists, simply by considering interface specifications? To answer these questions this paper introduces the following rule: Whenever interface operations commute, they can be implemented in a way that scales. This rule aids developers in building more scalable software starting from interface design and carrying on through implementation, testing, and evaluation.

To help developers apply the rule, a new tool named Commuter accepts high-level interface models and generates tests of operations that commute and hence could scale. Using these tests, Commuter can evaluate the scalability of an implementation. We apply Commuter to 18 POSIX calls and use the results to guide the implementation of a new research operating system kernel called sv6. Linux scales for 68% of the 13,664 tests generated by Commuter for these calls, and Commuter finds many problems that have been observed to limit application scalability. sv6 scales for 99% of the tests.

Austin T. Clements, M. Frans Kaashoek, and Nickolai Zeldovich. EuroSys 2013.

RadixVM is a new virtual memory system design that enables fully concurrent operations on shared address spaces for multithreaded processes on cache-coherent multicore computers. Today, most operating systems serialize operations such as mmap and munmap, which forces application developers to split their multithreaded applications into multiprocess applications, hoard memory to avoid the overhead of returning it, and so on. RadixVM removes this burden from application developers by ensuring that address space operations on non-overlapping memory regions scale perfectly. It does so by combining three techniques: 1) it organizes metadata in a radix tree instead of a balanced tree to avoid unnecessary cache line movement; 2) it uses a novel memory-efficient distributed reference counting scheme; and 3) it uses a new scheme to target remote TLB shootdowns and to often avoid them altogether. Experiments on an 80 core machine show that RadixVM achieves perfect scalability for non-overlapping regions: if several threads mmap or munmap pages in parallel, they can run completely independently and induce no cache coherence traffic.

Downloads

Commuter's source code is available on GitHub. You can browse our POSIX model and its test code generator online to get a feel for how Commuter models work.

The source code for sv6, our multicore research kernel built with the help of Commuter, is also available on GitHub.

Commuter depends on several related pieces of software. These are detailed in the build directions, but here's a quick overview: Z3 from Microsoft Research, mtrace, libelfin, and linux-mtrace.

The test code, model file, and sharing reports for Linux and sv6 from our SOSP 2013 paper are also available to download.

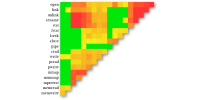

Demo

Below is an interactive browser for the test data for Linux and sv6 from our SOSP 2013 paper. Click around to filter and expand the data.

Contact

Questions? Comments? Email